Researchers at the Technion-Israel Institute of Technology have developed a platform able to speed up the learning process of artificial intelligence (AI) systems 1,000-fold.

No one thinks that machines will completely take the place of human brains, but the most sophisticated computers can process data much faster than we can. AI is the simulation of human intelligence processes by machines, especially computer systems. These processes include learning (the acquisition of information and rules for using the information), reasoning (using rules to reach approximate or definite conclusions) and self-correction.

Many people have become nervous as computers grow increasingly capable. Yet although such systems can pose dangers such as cyberattacks, the possibilities for benefiting humanity are immense. AI makes societies more efficient, opening new jobs, saving money and creating opportunities for revenue generation. It also spares people many of the tedious jobs they would otherwise have to do.

Computers are already creating smart homes that save energy and improving medical care by monitoring patients from afar. They will save lives currently lost in road accidents by driving cars for us more safely. AI can also expand human ingenuity and creativity. Since we can’t turn the clock back, it is advisable to take advantage of AI while keeping it in check.

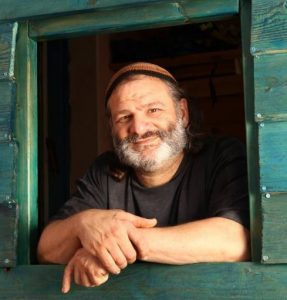

Prof. Shahar Kvatinsky and doctoral student Tzofnat Greenberg-Toledo – together with students Roee Mazor and Ameer Haj-Ali of Technion’s Andrew and Erna Viterbi Faculty of Electrical Engineering – recently published their research in IEEE Transactions on Circuits and Systems, a journal of the Institute of Electrical and Electronics Engineers (IEEE).

In recent years, there has been major progress in the world of AI, mostly thanks to models of deep neural networks (DNNs), which are sets of algorithms inspired by the human brain that are designed to recognize patterns.

Inspired by human learning methods, these DNNs have had unprecedented success in dealing with complex tasks such as autonomous driving, natural language processing, image recognition and the development of innovative medical treatments. All this is achieved through the machine’s self-learning from a vast pool of examples often represented by images. This technology is developing rapidly in academic research groups, and leading companies such as Facebook and Google are utilizing it for their specific needs.

Learning by example requires substantial computing power and is therefore performed on computers equipped with graphics processing units (GPUs) suited to the job. But these units consume considerable amounts of energy, and they run slower than the learning rate the neural networks require, which hinders the learning process.

“In fact, we are dealing with hardware originally intended for mostly graphic purposes; it fails to keep up with the fast-paced activity of the neural networks,” explained Kvatinsky. “To solve this problem, we need to design a hardware that will be compatible with deep neural networks.”

Kvatinsky and his research group have developed a hardware system specifically designed to work with these networks, enabling the neural network to perform the learning phase with greater speed and less energy consumption. “Compared to GPUs, the new hardware’s calculation speed is 1,000 times faster and reduces power consumption by 80%.”

This novel hardware represents a conceptual change: instead of focusing on improving existing processors, the researchers decided to develop the structure of a three-dimensional computing machine that integrates memory.

“Rather than split between the units that perform calculations and the memory responsible for storing information, we conduct both tasks within the memristor, a memory component with enhanced calculation capabilities assigned to work with deep neural networks.”
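The article does not describe the circuit itself. As a rough illustration of what computing inside memory can mean, the Python sketch below models an idealized memristor crossbar, an arrangement assumed here for illustration and not taken from the Technion design. Each stored weight is a conductance, input values are applied as row voltages, and the current collected on each column is the weighted sum a neural network layer needs. The function name `crossbar_currents` and the numbers are hypothetical.

```python
def crossbar_currents(conductances, voltages):
    """Column currents of an idealized crossbar: I_j = sum_i V_i * G[i][j].

    conductances: one list per row, one stored weight per column.
    voltages: one input value per row.
    Wire resistance, noise and device nonlinearity are ignored (assumption).
    """
    n_cols = len(conductances[0])
    return [
        sum(v * row[j] for v, row in zip(voltages, conductances))
        for j in range(n_cols)
    ]


# Hypothetical 2x2 array: the weights stay in place, only voltages are applied.
stored_weights = [[1.0, 2.0],
                  [3.0, 4.0]]
inputs = [1.0, 0.5]
print(crossbar_currents(stored_weights, inputs))
```

In this idealized picture the multiplication happens where the weights are stored, so they never have to travel to a separate processor.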

Although their research is still at a theoretical stage, they have already shown via simulation how it could be implemented. “Currently, our development is destined to work with the momentum learning algorithms, but our intention is to continue developing the hardware so that it will be compatible with other learning algorithms as well. We may be able to develop a dynamic, multi-purpose hardware which will be able to adapt to various algorithms, instead of having a number of different hardware components,” Kvatinsky concluded.
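For readers unfamiliar with momentum learning, here is a minimal Python sketch of the textbook form of gradient descent with momentum. It is a software illustration only, not the researchers' hardware implementation. The function names, the learning rate `lr`, the momentum factor `beta` and the toy objective are assumptions chosen for the example.

```python
def momentum_step(weights, velocity, grads, lr=0.1, beta=0.9):
    """One momentum update; returns the new (weights, velocity).

    The velocity is a running blend of past gradients, so the update
    keeps one extra stored value for every weight.
    """
    new_velocity = [beta * v - lr * g for v, g in zip(velocity, grads)]
    new_weights = [w + v for w, v in zip(weights, new_velocity)]
    return new_weights, new_velocity


def minimize_quadratic(steps=200):
    """Toy run: minimize f(w) = (w - 3)^2, whose gradient is 2 * (w - 3)."""
    weights, velocity = [0.0], [0.0]
    for _ in range(steps):
        grads = [2.0 * (weights[0] - 3.0)]
        weights, velocity = momentum_step(weights, velocity, grads)
    return weights[0]


# The result should settle close to the minimum at w = 3.
print(minimize_quadratic())
```

Each step reads and rewrites both a weight and its velocity, so the algorithm moves a good deal of data in and out of memory on every update.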