Since the COVID-19 pandemic began, people confined to their homes, from university lecturers and students to grandparents and grandchildren, have been using Zoom to learn and keep in touch.

Zoom is an American communications technology company founded in 2011 by Eric Yuan, a former Cisco engineer and executive who launched its software in 2013. It offers videotelephony and online chat services through a cloud-based, peer-to-peer software platform and is used for teleconferencing, telecommuting, distance education and just chatting.

But it became widely known and took off only in the spring of 2020, when the novel coronavirus spread around the world.

Because many of Zoom’s employees are based in China, some observers have worried about the risk of censorship and surveillance.

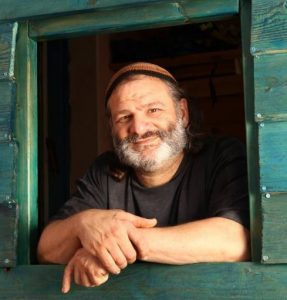

Now, researchers at Ben-Gurion University of the Negev (BGU) in Beersheba have shown that there is a basis for privacy worries. Video conference users should not post screen images of Zoom and other video conference sessions on social media, advises Dr. Michael Fire of the university’s software and information systems engineering department.

He and his team easily identified people from public screenshots of video meetings on Zoom, Microsoft Teams and Google Meet. In April 2020, nearly 500 million people were using these online systems. While many privacy issues have been associated with video conferencing, the BGU researchers looked specifically at what types of information they could extract from video collage images that were posted online or on social media.

“The findings in our paper indicate that it is relatively easy to collect thousands of publicly available images of video conference meetings and extract personal information about the participants, including their face images, age, gender and full names,” warned Fire. “This type of extracted data can vastly and easily jeopardize people’s security and privacy, affecting adults as well as young children and the elderly.”

Their article, entitled “Zooming into Video Conferencing Privacy and Security Threats,” has just been posted on arXiv, a free distribution service and an open-access archive for 1,731,155 scholarly articles in the fields of physics, mathematics, computer science, quantitative biology, quantitative finance, statistics, electrical engineering and systems science, and economics. Materials on this site are not peer-reviewed.

The researchers reported that it is also possible to extract private information from collage images of meeting participants posted on Instagram and Twitter. They used image-processing and text-recognition tools, as well as social network analysis, to explore a dataset of more than 15,700 collage images and more than 142,000 face images of meeting participants.

Artificial intelligence-based image-processing algorithms helped identify the same individual’s participation at different meetings by simply using either face recognition or other extracted user features like the image background. The researchers were able to spot faces 80% of the time as well as detect gender and estimate age.

Free web-based text recognition libraries allowed the BGU researchers to correctly determine nearly two-thirds of usernames from screenshots. The researchers identified 1,153 people who likely appeared in more than one meeting, as well as networks of Zoom users in which all the participants were coworkers. “This proves that the privacy and security of individuals and companies are at risk from data exposed on video conference meetings,” according to the team, which also includes BGU SISE researchers Dima Kagan and Dr. Galit Fuhrmann Alpert.
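To make the linking step concrete, here is a minimal sketch of how usernames read from screenshots could be matched across meetings and turned into a simple co-occurrence network. It is not the researchers' code: the meeting data, the `GENERIC_NAMES` set and the function names are all hypothetical, and the sketch assumes the usernames have already been extracted by a text-recognition step.

```python
from collections import defaultdict
from itertools import combinations

# Hypothetical example data: meeting ID -> usernames read from a screenshot.
# These names are invented for illustration and are not from the study.
meetings = {
    "meeting_a": ["Dana K", "iPhone", "Noa L"],
    "meeting_b": ["Dana K", "Noa L", "Omer S"],
    "meeting_c": ["iPhone", "Tal R"],
}

# Assumed list of generic device labels that do not identify a person.
GENERIC_NAMES = {"iphone", "izoom"}


def normalize(name: str) -> str:
    """Lowercase and collapse whitespace so minor variants compare equal."""
    return " ".join(name.lower().split())


def recurring_participants(meetings):
    """Return {name: sorted meeting IDs} for names seen in two or more meetings."""
    seen = defaultdict(set)
    for meeting_id, names in meetings.items():
        for name in names:
            key = normalize(name)
            if key not in GENERIC_NAMES:
                seen[key].add(meeting_id)
    return {name: sorted(ids) for name, ids in seen.items() if len(ids) > 1}


def co_occurrence_edges(meetings):
    """Count how often each pair of distinctive names shares a meeting."""
    edges = defaultdict(int)
    for names in meetings.values():
        keys = sorted({normalize(n) for n in names} - GENERIC_NAMES)
        for a, b in combinations(keys, 2):
            edges[(a, b)] += 1
    return dict(edges)
```

In this toy data, the two distinctive names that recur are linked across meetings, while the generic label "iPhone" is dropped even though it appears twice, because it says nothing about who was behind it.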

Cross-referencing facial image data with social network data may pose an even greater privacy risk, they explained, as it is possible to identify a user who appears in several video conference meetings and maliciously bring together different information sources about the targeted individual.

“This type of extracted data can vastly and easily jeopardize people’s security and privacy both in the online and real-world, affecting not only adults but also more vulnerable segments of society, such as young children and older adults,” they wrote.

“A malicious user that gains access to video conferencing meetings can collect sensitive and private data on users, such as their names, usernames, images of their faces, samples of their voice, and even exposure to personal data that has been shared as part of the conversations. Moreover, using accessible deep-fake tools, a malicious user can attend video conferencing meetings under a false identity,” they continued.

“For example, one can use real-time, deep-fake tools in order to join meetings using celebrity avatars that make him or her look like celebrities, such as Barack Obama or Elon Musk. The participants’ personal data can later be used to jeopardize participants’ safety in both the virtual and the real-world.”

Previous researchers, for instance, showed that face recognition can be used to identify individuals both online and offline; they used publicly available images from Facebook to identify students strolling through campus. They also showed that it is possible to predict personal and sensitive information from a face, such as the individual’s interests, activities and even his or her social security number.

The BGU team made several recommendations to prevent privacy and security intrusions. These include not posting video conference images online or sharing videos; using generic pseudonyms like “iZoom” or “iPhone” rather than a unique username or real name; and using a virtual background rather than a real one, since a real background can help fingerprint a user account across several meetings.

In addition, the researchers advise video conferencing operators to add a privacy mode to their platforms, for example by applying filters or Gaussian noise to an image, which can disrupt automated facial recognition while keeping the face recognizable to human viewers.
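As a rough illustration of the Gaussian noise idea, the sketch below perturbs each pixel of a small grayscale image by a random amount drawn from a normal distribution. It is only an assumption about what such a privacy mode might do, not any platform's actual feature: the function name, the `sigma` value and the tiny synthetic image are hypothetical, and no claim is made that this noise level defeats any particular face recognition system.

```python
import random


def add_gaussian_noise(pixels, sigma=12.0, seed=None):
    """Return a copy of a grayscale image with Gaussian noise added.

    pixels: list of rows, each a list of ints in the range 0..255.
    sigma:  standard deviation of the noise (an assumed, untuned value).
    seed:   optional seed so the result can be reproduced.
    """
    rng = random.Random(seed)
    noisy = []
    for row in pixels:
        noisy_row = []
        for value in row:
            perturbed = value + rng.gauss(0.0, sigma)
            # Round, then clamp to the valid 8-bit range.
            noisy_row.append(max(0, min(255, round(perturbed))))
        noisy.append(noisy_row)
    return noisy


# Tiny synthetic 3x4 "image" used only for demonstration.
image = [
    [0, 64, 128, 255],
    [10, 80, 160, 240],
    [20, 100, 180, 220],
]
noisy_image = add_gaussian_noise(image, sigma=12.0, seed=0)
```

A larger `sigma` distorts the image more. A real privacy filter would have to balance that distortion against how clearly participants can still see each other.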

“Since organizations are relying on video conferencing to enable their employees to work from home and conduct meetings, they need to better educate and monitor a new set of security and privacy threats,” Fire said. “Parents and children of the elderly also need to be vigilant, as video conferencing is no different than other online activity.”